- This event has passed.

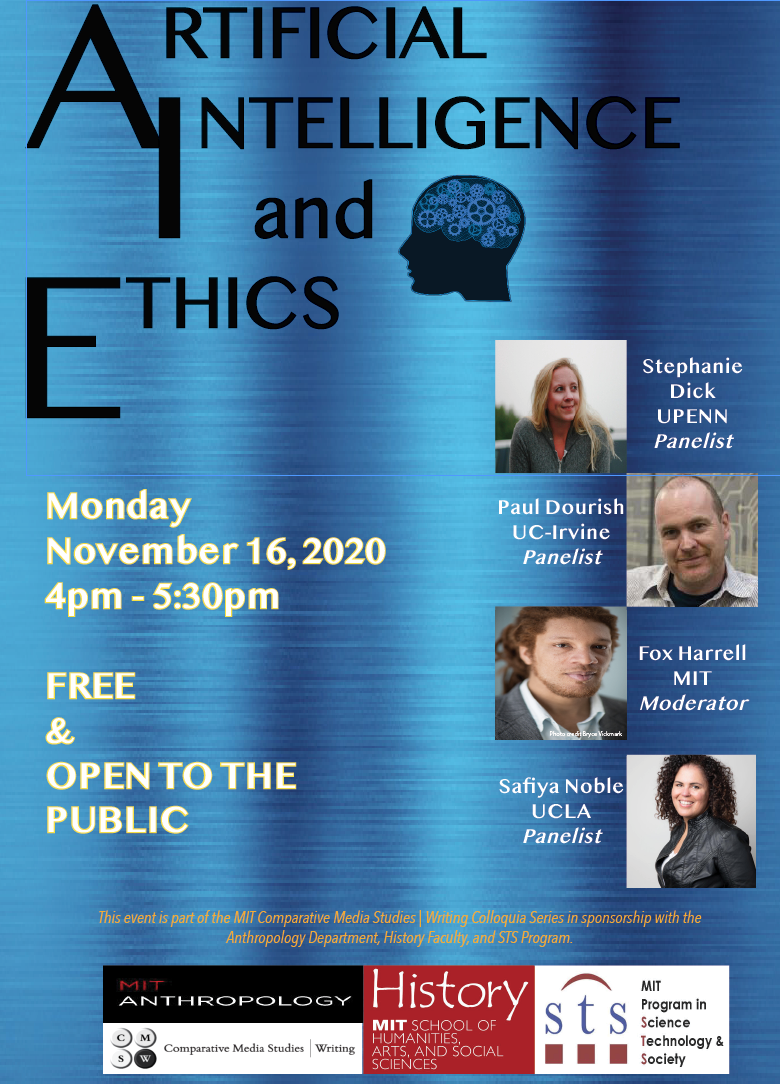

Artificial Intelligence & Ethics

November 16, 2020 @ 4:00 pm - 5:30 pm

Social scientists and humanists are not the only ones raising concerns about the realities and directions of AI; many students and faculty in engineering are also voicing hesitations about all aspects of it, something few of us have seen with previous technologies.

Listen in as the panelists raise the right issues about broader currents in computer science, AI, and society.

Stephanie Dick, University of Pennsylvania

PANELIST

Stephanie Dick is an Assistant Professor of History and Sociology of Science at the University of Pennsylvania. Prior to joining the faculty, she was a Junior Fellow in the Harvard Society of Fellows. She holds a PhD in History of Science from Harvard University. She is a historian of mathematics, computing, and artificial intelligence. Her first book project, Making Up Minds: Proof and Computing in the Postwar United States, tracks early efforts to automate mathematical proof and the many controversies about minds and machines that surrounded them. Her second project explores the early introduction of computing to domestic policing in the United States, including databasing practices and automated identification tools.

ABSTRACT: In 1969, the New York State Police Department became the first in America to create a centralized and standardized computerized law enforcement database. NYSIIS – the New York State Identification and Intelligence System – was developed after the 1957 Apalachin Meeting of organized crime in New York State, at which Sergeant Edgar Croswell explained numerous “information circulation” bottlenecks and failures that had slowed his infamous Apalachin raid, which had resulted in over 60 arrests. He reported that some of the main suspects in his investigation were the subjects of “as many as two hundred separate official police files in a surrounding area of several hundred miles,” and called for more efficient and centralized file-sharing. The resulting NYSIIS system was heralded as a “scientific breakthrough” in policing that would allow improved objectivity and accuracy, especially in the identification of individuals who had multiple encounters with law enforcement. However, like many supposedly “innovative technologies,” NYSIIS was in fact very conservative, serving to reinforce a social order that subjected different parts of the population differentially to surveillance and policing. In this short presentation, I will describe the system and turn quickly to the 1974 Congressional hearings that followed. The hearings were meant to investigate whether or not people’s rights were being violated by law enforcement databasing practices. However, their inquiries operated entirely within the logic of databasing, surveillance, and automated identification at work in systems like NYSIIS, never questioning its underlying vision of policing or the policed. I use this case to demonstrate how technical choices can foreclose legal, ethical, and political ones, and how critique, when it happens on the terms of that which it critiques, can strengthen, more than check, technical power.

Paul Dourish, UC-Irvine

PANELIST

Safiya Noble, UCLA

PANELIST

Dr. Safiya Umoja Noble is an Associate Professor at UCLA in the Departments of Information Studies and African American Studies. She is the author of a best-selling book on racist and sexist algorithmic bias in commercial search engines, entitled Algorithms of Oppression: How Search Engines Reinforce Racism (NYU Press). Dr. Noble is the co-editor of two edited volumes: The Intersectional Internet: Race, Sex, Culture and Class Online and Emotions, Technology & Design. She currently serves as an Associate Editor for the Journal of Critical Library and Information Studies, and is the co-editor of the Commentary & Criticism section of the Journal of Feminist Media Studies. She is a member of several academic journal and advisory boards, including Taboo: The Journal of Culture and Education.

D. Fox Harrell, MIT

MODERATOR

Photo credit: Bryce Vickmark

D. Fox Harrell, Ph.D., is Professor of Digital Media & Artificial Intelligence in the Comparative Media Studies Program and Computer Science and Artificial Intelligence Laboratory (CSAIL) at MIT. He is the director of the MIT Center for Advanced Virtuality. His research explores the relationship between imagination and computation and involves inventing new forms of VR, computational narrative, videogaming for social impact, and related digital media forms. The National Science Foundation has recognized Harrell with an NSF CAREER Award for his project “Computing for Advanced Identity Representation.” Dr. Harrell holds a Ph.D. in Computer Science and Cognitive Science from the University of California, San Diego. His other degrees include a Master’s degree in Interactive Telecommunication from New York University’s Tisch School of the Arts, and a B.S. in Logic and Computation and B.F.A. in Art (electronic and time-based media) from Carnegie Mellon University – each with highest honors. He has worked as an interactive television producer and as a game designer. His book Phantasmal Media: An Approach to Imagination, Computation, and Expression was published by the MIT Press (2013).